
ImageOptim, an image optimization tool for OS X First, compress images using a lossy method, such as resizing images to sizes no bigger than necessary, then exporting them at a slightly lower quality without compromising too much (e.g. There are 2 ways to optimize images:įor best results I do both, and the order is important. You can also squeeze bytes out of images by optimizing them. However, browser compatibility may be an issue with modern CSS therefore, make good use of and enhance progressively. Use CSS instead of images when possible-there’s so much that can be done with CSS nowadays. SVG sprites look interesting and seem like they could be a viable solution to replacing common inline SVG icons I use throughout the site. In comparison, one page of the current site loads 10KB of inline SVGs-that’s a 93% difference. One page of the previous version of my site was loading 145KB in icon webfonts alone, and of the hundreds of icons in the webfonts, I was only utilizing a dozen of them. In addition, any images that could be drawn as vectors were placed with inline SVGs as well. I ditched icon fonts and replaced them with inline SVGs. Images typically make up the bulk of a website. Since then I managed to strip away even more (7KB compressed), and the script rounds out to only 0.365KB when compressed and gzipped. I shaved off 122KB by stripping away jQuery and rewriting it in vanilla JavaScript, which cut the file size down to 13KB minified. Of the 135KB of minified JavaScript, about 96KB was the jQuery library alone-71%! There wasn’t a lot that relied on jQuery, so I took the time to refactor my code. Refactoring some of my code to be less verbose (“navigation” to “nav”, “introduction” to “intro”) gave me some savings but wasn’t nearly as noticeable as before-vs-after compression, which I expected. I write CSS using the BEM (Block, Element, Modifier) methodology, and it can result in long, verbose class names. 
CSS and JavaScriptĬompressing/minifying your stylesheets and JavaScript files can noticeably decrease file sizes-I saved up to 56% from one file in compression alone. What Does My Site Cost? built by Tim Kadlec, is a wonderful tool to help you test and visualize what it costs to visit your site from around the world based on the weight of your site. I used these numbers as a reference and starting point to compare and find ways to cut weight where I could. The smaller the files, the faster they load. Now that I had found ways to minimize requests made, I began looking for ways to cut fat off the meat. like/tweet/share count) but ask the question: “Is the like count that important?” Compression/Optimization There may be a way to accomplish what it does without depending on the third-party. I wrote more on this matter on Responsible Social Share Links.Įvaluate each third-party script and determine its importance. It allowed me to strip away the third-party scripts I was using for sharing, which accounted for 7 requests. Instead, I went with “share-intent” URLs, which are basically links used to pass and construct data into a share and can be used to create social share links using just HTML. That’s an additional 14 HTTP requests and 203.2KB. A test environment with social sharing scripts from a handful of popular social sites shows that they quickly add up: Site

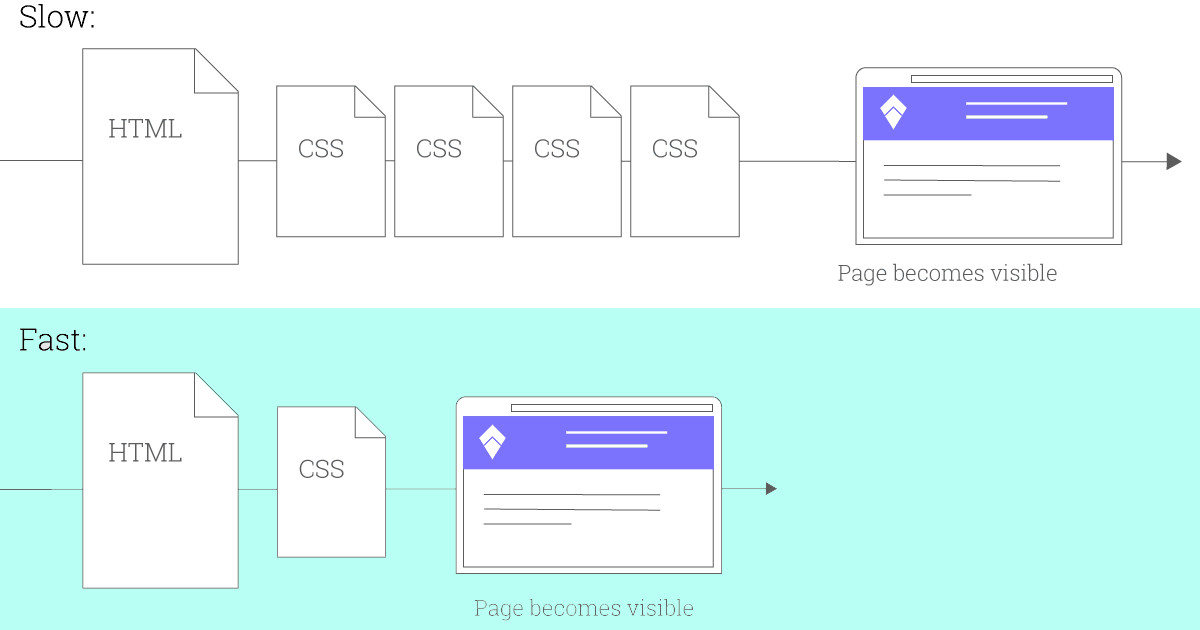

Google Chrome Developer Tools’ Network tabįor example, Facebook’s script makes 3 requests. Your browser’s built-in developer tools can help you sniff out the offenders. Third-party scripts are common culprits for making additional requests-many make more than 1 request to grab additional files such as scripts, images or CSS. using a build tool like Grunt or Gulp) is ideal, but at the very least should be done manually for production. One way is to compile or concatenate (combining/merging) CSS and JS into one file each.

Here’s what I did, why I did it, and the tools I used to optimize front-end performance on my site.Įvery asset required to render the page (external CSS or JS files, webfonts, images, etc.) as your site loads is an additional HTTP request. Since then my site has gone through another redesign, and although I made various workflow and server-side improvements, I gave front-end performance extra attention. Last year I wrote a post, Need for Speed, where I shared my workflows and techniques along with the tools involved in the development of my site. Need for Speed 2: Improving Front-End Performance
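As a rough illustration of the “share-intent” URLs mentioned in this post, here is a minimal sketch of how such links could be assembled. The helper name `buildShareLinks` is hypothetical, and the Twitter and Facebook endpoint patterns are assumptions based on those sites’ publicly documented share URLs; the post itself does not say which sites or URL formats were used, and these endpoints may change.

```javascript
// Hypothetical helper (not from the original post): builds share-intent
// URLs for a page so that sharing needs no third-party script.
// The endpoint patterns below are assumptions; check each site's docs.
function buildShareLinks(pageUrl, title) {
  // Encode values so that characters such as "&" or spaces cannot
  // break the query string of the share URL.
  const url = encodeURIComponent(pageUrl);
  const text = encodeURIComponent(title);

  return {
    twitter: `https://twitter.com/intent/tweet?url=${url}&text=${text}`,
    facebook: `https://www.facebook.com/sharer/sharer.php?u=${url}`,
  };
}

// Example usage with placeholder values.
const links = buildShareLinks("https://example.com/post", "Need for Speed 2");
console.log(links.twitter);
console.log(links.facebook);
```

Each resulting URL can be dropped into the `href` of a plain link, so the share buttons cost no extra script requests. Because the links are static, they could also be generated once at build time rather than in the browser.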
